diff --git a/deepdoc/README_tr.md b/deepdoc/README_tr.md

new file mode 100644

index 00000000000..434a4cce3ff

--- /dev/null

+++ b/deepdoc/README_tr.md

@@ -0,0 +1,136 @@

+[English](./README.md) | [简体中文](./README_zh.md) | Türkçe

+

+# *Deep*Doc

+

+- [*Deep*Doc](#deepdoc)

+ - [1. Giriş](#1-giriş)

+ - [2. Görsel İşleme](#2-görsel-i̇şleme)

+ - [3. Ayrıştırıcı](#3-ayrıştırıcı)

+ - [Özgeçmiş](#özgeçmiş)

+

+

+## 1. Giriş

+

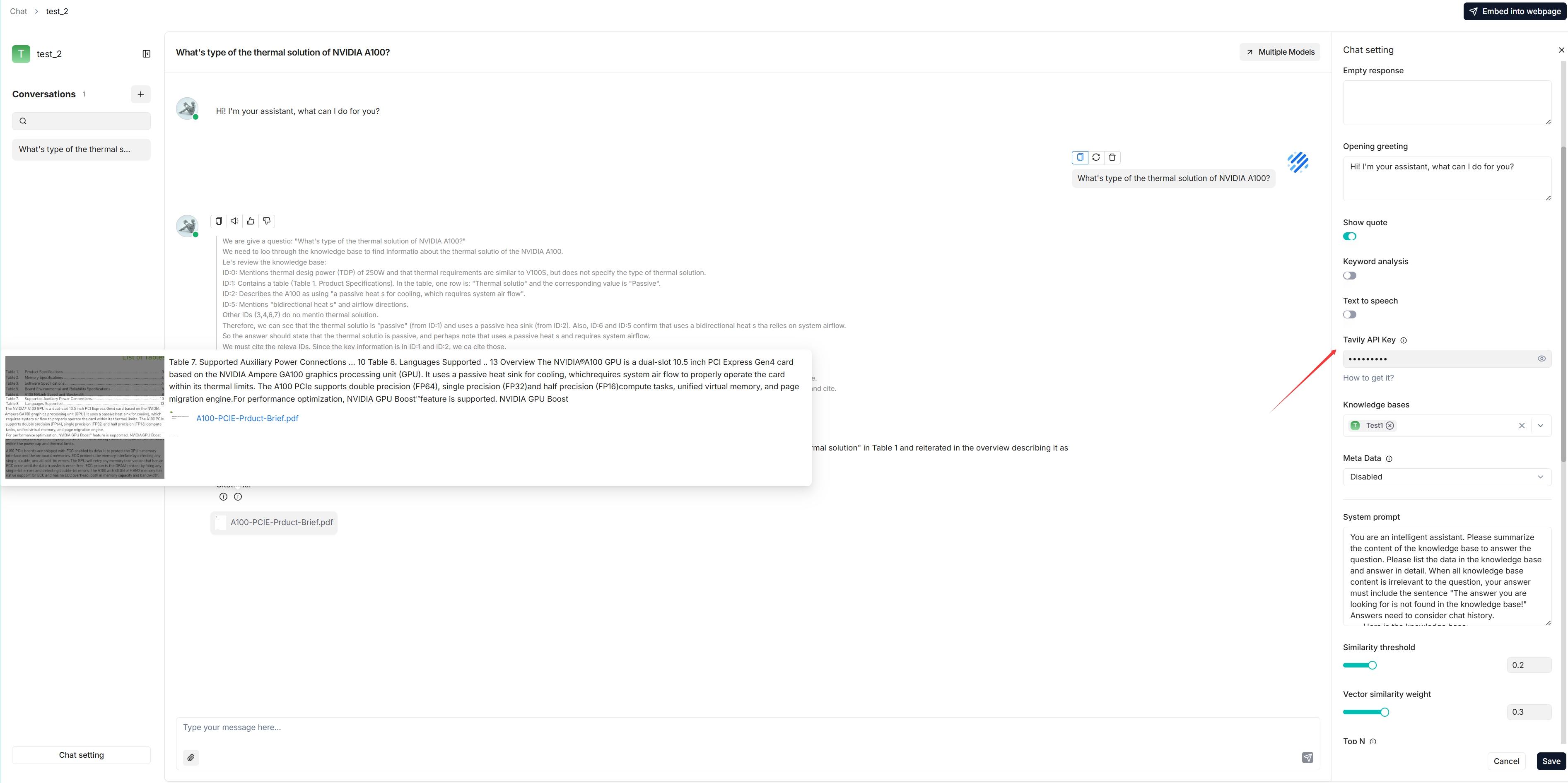

+Farklı alanlardan, farklı formatlarda ve farklı erişim gereksinimleriyle gelen çok sayıda doküman için doğru bir analiz son derece zorlu bir görev haline gelmektedir. *Deep*Doc tam bu amaç için doğmuştur. Şu ana kadar *Deep*Doc'ta iki bileşen bulunmaktadır: görsel işleme ve ayrıştırıcı. OCR, yerleşim tanıma ve TSR sonuçlarımızla ilgileniyorsanız aşağıdaki test programlarını çalıştırabilirsiniz.

+

+```bash

+python deepdoc/vision/t_ocr.py -h

+usage: t_ocr.py [-h] --inputs INPUTS [--output_dir OUTPUT_DIR]

+

+options:

+ -h, --help show this help message and exit

+ --inputs INPUTS Directory where to store images or PDFs, or a file path to a single image or PDF

+ --output_dir OUTPUT_DIR

+ Directory where to store the output images. Default: './ocr_outputs'

+```

+

+```bash

+python deepdoc/vision/t_recognizer.py -h

+usage: t_recognizer.py [-h] --inputs INPUTS [--output_dir OUTPUT_DIR] [--threshold THRESHOLD] [--mode {layout,tsr}]

+

+options:

+ -h, --help show this help message and exit

+ --inputs INPUTS Directory where to store images or PDFs, or a file path to a single image or PDF

+ --output_dir OUTPUT_DIR

+ Directory where to store the output images. Default: './layouts_outputs'

+ --threshold THRESHOLD

+ A threshold to filter out detections. Default: 0.5

+ --mode {layout,tsr} Task mode: layout recognition or table structure recognition

+```

+

+Modellerimiz HuggingFace üzerinden sunulmaktadır. HuggingFace modellerini indirmekte sorun yaşıyorsanız, bu yardımcı olabilir!

+

+```bash

+export HF_ENDPOINT=https://hf-mirror.com

+```

+

+

+## 2. Görsel İşleme

+

+İnsanlar olarak sorunları çözmek için görsel bilgiyi kullanırız.

+

+ - **OCR (Optik Karakter Tanıma)**. Birçok doküman görsel olarak sunulduğundan veya en azından görsele dönüştürülebildiğinden, OCR metin çıkarımı için çok temel, önemli ve hatta evrensel bir çözümdür.

+ ```bash

+ python deepdoc/vision/t_ocr.py --inputs=gorsel_veya_pdf_yolu --output_dir=sonuc_klasoru

+ ```

+ Girdi, görseller veya PDF'ler içeren bir dizin ya da tek bir görsel veya PDF dosyası olabilir.

+ Sonuçların konumlarını gösteren görsellerin ve OCR metnini içeren txt dosyalarının bulunduğu `sonuc_klasoru` klasörüne bakabilirsiniz.

+

+

+

+

+ - **Yerleşim Tanıma (Layout Recognition)**. Farklı alanlardan gelen dokümanlar farklı yerleşimlere sahip olabilir; gazete, dergi, kitap ve özgeçmiş gibi dokümanlar yerleşim açısından birbirinden farklıdır. Yalnızca makine doğru bir yerleşim analizi yapabildiğinde, metin parçalarının ardışık olup olmadığına, bu parçanın Tablo Yapısı Tanıma (TSR) ile mi işlenmesi gerektiğine veya bu parçanın bir şekil olup bu başlıkla mı açıklandığına karar verebilir.

+ Çoğu durumu kapsayan 10 temel yerleşim bileşenimiz vardır:

+ - Metin

+ - Başlık

+ - Şekil

+ - Şekil açıklaması

+ - Tablo

+ - Tablo açıklaması

+ - Üst bilgi

+ - Alt bilgi

+ - Referans

+ - Denklem

+

+ Yerleşim algılama sonuçlarını görmek için aşağıdaki komutu deneyin.

+ ```bash

+ python deepdoc/vision/t_recognizer.py --inputs=gorsel_veya_pdf_yolu --threshold=0.2 --mode=layout --output_dir=sonuc_klasoru

+ ```

+ Girdi, görseller veya PDF'ler içeren bir dizin ya da tek bir görsel veya PDF dosyası olabilir.

+ Aşağıdaki gibi algılama sonuçlarını gösteren görsellerin bulunduğu `sonuc_klasoru` klasörüne bakabilirsiniz:

+

+

+

+

+ - **TSR (Tablo Yapısı Tanıma)**. Veri tablosu, sayılar veya metin dahil verileri sunmak için sıklıkla kullanılan bir yapıdır. Bir tablonun yapısı; hiyerarşik başlıklar, birleştirilmiş hücreler ve yansıtılmış satır başlıkları gibi çok karmaşık olabilir. TSR'nin yanı sıra, içeriği LLM tarafından iyi anlaşılabilecek cümlelere dönüştürüyoruz.

+ TSR görevi için beş etiketimiz vardır:

+ - Sütun

+ - Satır

+ - Sütun başlığı

+ - Yansıtılmış satır başlığı

+ - Birleştirilmiş hücre

+

+ Algılama sonuçlarını görmek için aşağıdaki komutu deneyin.

+ ```bash

+ python deepdoc/vision/t_recognizer.py --inputs=gorsel_veya_pdf_yolu --threshold=0.2 --mode=tsr --output_dir=sonuc_klasoru

+ ```

+ Girdi, görseller veya PDF'ler içeren bir dizin ya da tek bir görsel veya PDF dosyası olabilir.

+ Algılama sonuçlarını gösteren görsellerin ve HTML sayfalarının bulunduğu `sonuc_klasoru` klasörüne bakabilirsiniz:

+

+

+

+

+ - **Tablo Otomatik Döndürme**. Tabloların yanlış yönde olabileceği (90°, 180° veya 270° döndürülmüş) taranmış PDF'ler için, PDF ayrıştırıcısı tablo yapısı tanıma işleminden önce en iyi döndürme açısını OCR güven puanlarını kullanarak otomatik olarak algılar. Bu, döndürülmüş tablolar için OCR doğruluğunu ve tablo yapısı algılamasını önemli ölçüde artırır.

+

+ Özellik 4 döndürme açısını (0°, 90°, 180°, 270°) değerlendirir ve en yüksek OCR güvenine sahip olanı seçer. En iyi yönlendirmeyi belirledikten sonra, doğru döndürülmüş tablo görseli üzerinde OCR'yi yeniden gerçekleştirir.

+

+ Bu özellik **varsayılan olarak etkindir**. Ortam değişkeni ile kontrol edebilirsiniz:

+ ```bash

+ # Tablo otomatik döndürmeyi devre dışı bırak

+ export TABLE_AUTO_ROTATE=false

+

+ # Tablo otomatik döndürmeyi etkinleştir (varsayılan)

+ export TABLE_AUTO_ROTATE=true

+ ```

+

+ Veya API parametresi ile:

+ ```python

+ from deepdoc.parser import PdfParser

+

+ parser = PdfParser()

+ # Bu çağrı için otomatik döndürmeyi devre dışı bırak

+ boxes, tables = parser(pdf_path, auto_rotate_tables=False)

+ ```

+

+

+## 3. Ayrıştırıcı

+

+PDF, DOCX, EXCEL ve PPT olmak üzere dört doküman formatının kendine özgü ayrıştırıcısı vardır. En karmaşık olanı, PDF'nin esnekliği nedeniyle PDF ayrıştırıcısıdır. PDF ayrıştırıcısının çıktısı şunları içerir:

+ - PDF'deki konumlarıyla birlikte metin parçaları (sayfa numarası ve dikdörtgen konumları).

+ - PDF'den kırpılmış görsel ve doğal dil cümlelerine çevrilmiş içerikleriyle tablolar.

+ - Açıklama ve şekil içindeki metinlerle birlikte şekiller.

+

+### Özgeçmiş

+

+Özgeçmiş çok karmaşık bir doküman türüdür. Çeşitli yerleşimlere sahip yapılandırılmamış metinden oluşan bir özgeçmiş, yaklaşık yüz alanı kapsayan yapılandırılmış veriye dönüştürülebilir.

+Ayrıştırıcıyı henüz açık kaynak olarak yayınlamadık; ayrıştırma prosedüründen sonraki işleme yöntemini açık kaynak olarak sunmaktayız.
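
The "Tablo Otomatik Döndürme" section of the README above describes trying four rotation angles (0°, 90°, 180°, 270°) and keeping the one with the highest OCR confidence. A minimal sketch of that selection step follows. It is not the parser's actual implementation: `pick_best_rotation`, `rotate_grid`, and `toy_confidence` are hypothetical names, and a small grid of characters stands in for a table image so the example runs without an OCR model.

```python
ANGLES = (0, 90, 180, 270)


def pick_best_rotation(image, rotate, ocr_confidence, angles=ANGLES):
    """Try each angle and return (angle, rotated_image, score) for the best score.

    `rotate` and `ocr_confidence` are caller-supplied callables (assumptions
    for this sketch, not names taken from the repository).
    """
    best = None
    for angle in angles:
        candidate = rotate(image, angle)
        score = ocr_confidence(candidate)
        if best is None or score > best[2]:
            best = (angle, candidate, score)
    return best


def rotate_grid(grid, angle):
    """Rotate a list-of-rows grid clockwise by a multiple of 90 degrees."""
    for _ in range((angle // 90) % 4):
        grid = [list(row) for row in zip(*grid[::-1])]
    return grid


def toy_confidence(grid):
    """Stand-in for OCR confidence: high only when the top-left cell is 'A'."""
    return 0.9 if grid[0][0] == "A" else 0.1


upright = [["A", "B"], ["C", "D"]]
sideways = rotate_grid(upright, 270)  # simulate a table scanned in the wrong direction
angle, fixed, score = pick_best_rotation(sideways, rotate_grid, toy_confidence)
print(angle, fixed, score)
```

In the real feature the scoring function would presumably be an OCR pass over the rotated table crop. According to the README, OCR is then run again on the correctly rotated table image.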

diff --git a/deepdoc/parser/__init__.py b/deepdoc/parser/__init__.py

index 809a56edf70..a34b1de0f39 100644

--- a/deepdoc/parser/__init__.py

+++ b/deepdoc/parser/__init__.py

@@ -15,6 +15,7 @@

#

from .docx_parser import RAGFlowDocxParser as DocxParser

+from .epub_parser import RAGFlowEpubParser as EpubParser

from .excel_parser import RAGFlowExcelParser as ExcelParser

from .html_parser import RAGFlowHtmlParser as HtmlParser

from .json_parser import RAGFlowJsonParser as JsonParser

@@ -29,6 +30,7 @@

"PdfParser",

"PlainParser",

"DocxParser",

+ "EpubParser",

"ExcelParser",

"PptParser",

"HtmlParser",

@@ -37,4 +39,3 @@

"TxtParser",

"MarkdownElementExtractor",

]

-

diff --git a/deepdoc/parser/docling_parser.py b/deepdoc/parser/docling_parser.py

index e8df1cfd4ee..a2ebc400255 100644

--- a/deepdoc/parser/docling_parser.py

+++ b/deepdoc/parser/docling_parser.py

@@ -17,6 +17,8 @@

import logging

import re

+import base64

+import os

from dataclasses import dataclass

from enum import Enum

from io import BytesIO

@@ -25,6 +27,7 @@

from typing import Any, Callable, Iterable, Optional

import pdfplumber

+import requests

from PIL import Image

try:

@@ -38,6 +41,8 @@

class RAGFlowPdfParser:

pass

+from deepdoc.parser.utils import extract_pdf_outlines

+

class DoclingContentType(str, Enum):

IMAGE = "image"

@@ -55,16 +60,60 @@ class _BBox:

y1: float

+def _extract_bbox_from_prov(item, prov_attr: str = "prov") -> Optional[_BBox]:
+    prov = getattr(item, prov_attr, None)
+    if not prov:
+        return None
+
+    prov_item = prov[0] if isinstance(prov, list) else prov
+    pn = getattr(prov_item, "page_no", None)
+    bb = getattr(prov_item, "bbox", None)
+    if pn is None or bb is None:
+        return None
+
+    # Default to None so a missing coordinate is caught by the check below
+    # instead of raising AttributeError.
+    coords = [getattr(bb, attr, None) for attr in ("l", "t", "r", "b")]
+    if None in coords:
+        return None
+
+    return _BBox(page_no=int(pn), x0=coords[0], y0=coords[1], x1=coords[2], y1=coords[3])

+

+

class DoclingParser(RAGFlowPdfParser):

- def __init__(self):

+ def __init__(self, docling_server_url: str = "", request_timeout: int = 600):

self.logger = logging.getLogger(self.__class__.__name__)

self.page_images: list[Image.Image] = []

self.page_from = 0

self.page_to = 10_000

self.outlines = []

-

-

- def check_installation(self) -> bool:

+ self.docling_server_url = (docling_server_url or "").rstrip("/")

+ self.request_timeout = request_timeout

+

+ def _effective_server_url(self, docling_server_url: Optional[str] = None) -> str:

+ return (docling_server_url or self.docling_server_url or "").rstrip("/") or (

+ os.environ.get("DOCLING_SERVER_URL", "").rstrip("/")

+ )

+

+ @staticmethod

+ def _is_http_endpoint_valid(url: str, timeout: int = 5) -> bool:

+ try:

+ response = requests.head(url, timeout=timeout, allow_redirects=True)

+ return response.status_code in [200, 301, 302, 307, 308]

+ except Exception:

+ try:

+ response = requests.get(url, timeout=timeout, allow_redirects=True)

+ return response.status_code in [200, 301, 302, 307, 308]

+ except Exception:

+ return False

+

+ def check_installation(self, docling_server_url: Optional[str] = None) -> bool:

+ server_url = self._effective_server_url(docling_server_url)

+ if server_url:

+ for path in ("/openapi.json", "/docs", "/v1/convert/source"):

+ if self._is_http_endpoint_valid(f"{server_url}{path}", timeout=5):

+ return True

+ self.logger.warning(f"[Docling] external server not reachable: {server_url}")

+ return False

+

if DocumentConverter is None:

self.logger.warning("[Docling] 'docling' is not importable, please: pip install docling")

return False

@@ -168,34 +217,22 @@ def crop(self, text: str, ZM: int = 1, need_position: bool = False):

def _iter_doc_items(self, doc) -> Iterable[tuple[str, Any, Optional[_BBox]]]:

for t in getattr(doc, "texts", []):

- parent=getattr(t, "parent", "")

- ref=getattr(parent,"cref","")

- label=getattr(t, "label", "")

- if (label in ("section_header","text",) and ref in ("#/body",)) or label in ("list_item",):

+ parent = getattr(t, "parent", "")

+ ref = getattr(parent, "cref", "")

+ label = getattr(t, "label", "")

+ if (label in ("section_header", "text") and ref in ("#/body",)) or label in ("list_item",):

text = getattr(t, "text", "") or ""

- bbox = None

- if getattr(t, "prov", None):

- pn = getattr(t.prov[0], "page_no", None)

- bb = getattr(t.prov[0], "bbox", None)

- bb = [getattr(bb, "l", None),getattr(bb, "t", None),getattr(bb, "r", None),getattr(bb, "b", None)]

- if pn and bb and len(bb) == 4:

- bbox = _BBox(page_no=int(pn), x0=bb[0], y0=bb[1], x1=bb[2], y1=bb[3])

+ bbox = _extract_bbox_from_prov(t)

yield (DoclingContentType.TEXT.value, text, bbox)

for item in getattr(doc, "texts", []):

if getattr(item, "label", "") in ("FORMULA",):

text = getattr(item, "text", "") or ""

- bbox = None

- if getattr(item, "prov", None):

- pn = getattr(item.prov, "page_no", None)

- bb = getattr(item.prov, "bbox", None)

- bb = [getattr(bb, "l", None),getattr(bb, "t", None),getattr(bb, "r", None),getattr(bb, "b", None)]

- if pn and bb and len(bb) == 4:

- bbox = _BBox(int(pn), bb[0], bb[1], bb[2], bb[3])

+ bbox = _extract_bbox_from_prov(item)

yield (DoclingContentType.EQUATION.value, text, bbox)

- def _transfer_to_sections(self, doc, parse_method: str) -> list[tuple[str, str]]:

- sections: list[tuple[str, str]] = []

+ def _transfer_to_sections(self, doc, parse_method: str) -> list[tuple[str, ...]]:

+ sections: list[tuple[str, ...]] = []

for typ, payload, bbox in self._iter_doc_items(doc):

if typ == DoclingContentType.TEXT.value:

section = payload.strip()

@@ -207,7 +244,7 @@ def _transfer_to_sections(self, doc, parse_method: str) -> list[tuple[str, str]]

continue

tag = self._make_line_tag(bbox) if isinstance(bbox,_BBox) else ""

- if parse_method == "manual":

+ if parse_method in {"manual", "pipeline"}:

sections.append((section, typ, tag))

elif parse_method == "paper":

sections.append((section + tag, typ))

@@ -248,16 +285,9 @@ def _transfer_to_tables(self, doc):

for tab in getattr(doc, "tables", []):

img = None

positions = ""

- if getattr(tab, "prov", None):

- pn = getattr(tab.prov[0], "page_no", None)

- bb = getattr(tab.prov[0], "bbox", None)

- if pn is not None and bb is not None:

- left = getattr(bb, "l", None)

- top = getattr(bb, "t", None)

- right = getattr(bb, "r", None)

- bott = getattr(bb, "b", None)

- if None not in (left, top, right, bott):

- img, positions = self.cropout_docling_table(int(pn), (float(left), float(top), float(right), float(bott)))

+ bbox = _extract_bbox_from_prov(tab)

+ if bbox:

+ img, positions = self.cropout_docling_table(bbox.page_no, (bbox.x0, bbox.y0, bbox.x1, bbox.y1))

html = ""

try:

html = tab.export_to_html(doc=doc)

@@ -267,16 +297,9 @@ def _transfer_to_tables(self, doc):

for pic in getattr(doc, "pictures", []):

img = None

positions = ""

- if getattr(pic, "prov", None):

- pn = getattr(pic.prov[0], "page_no", None)

- bb = getattr(pic.prov[0], "bbox", None)

- if pn is not None and bb is not None:

- left = getattr(bb, "l", None)

- top = getattr(bb, "t", None)

- right = getattr(bb, "r", None)

- bott = getattr(bb, "b", None)

- if None not in (left, top, right, bott):

- img, positions = self.cropout_docling_table(int(pn), (float(left), float(top), float(right), float(bott)))

+ bbox = _extract_bbox_from_prov(pic)

+ if bbox:

+ img, positions = self.cropout_docling_table(bbox.page_no, (bbox.x0, bbox.y0, bbox.x1, bbox.y1))

captions = ""

try:

captions = pic.caption_text(doc=doc)

@@ -285,6 +308,141 @@ def _transfer_to_tables(self, doc):

tables.append(((img, [captions]), positions if positions else ""))

return tables

+ @staticmethod

+ def _sections_from_remote_text(text: str, parse_method: str) -> list[tuple[str, ...]]:

+ txt = (text or "").strip()

+ if not txt:

+ return []

+ if parse_method in {"manual", "pipeline"}:

+ return [(txt, DoclingContentType.TEXT.value, "")]

+ if parse_method == "paper":

+ return [(txt, DoclingContentType.TEXT.value)]

+ return [(txt, "")]

+

+ @staticmethod

+ def _extract_remote_document_entries(payload: Any) -> list[dict[str, Any]]:

+ if not isinstance(payload, dict):

+ return []

+ if isinstance(payload.get("document"), dict):

+ return [payload["document"]]

+ if isinstance(payload.get("documents"), list):

+ return [d for d in payload["documents"] if isinstance(d, dict)]

+ if isinstance(payload.get("results"), list):

+ docs = []

+ for it in payload["results"]:

+ if isinstance(it, dict):

+ if isinstance(it.get("document"), dict):

+ docs.append(it["document"])

+ elif isinstance(it.get("result"), dict):

+ docs.append(it["result"])

+ else:

+ docs.append(it)

+ return docs

+ return []

+

+ def _parse_pdf_remote(

+ self,

+ filepath: str | PathLike[str],

+ binary: BytesIO | bytes | None = None,

+ callback: Optional[Callable] = None,

+ *,

+ parse_method: str = "raw",

+ docling_server_url: Optional[str] = None,

+ request_timeout: Optional[int] = None,

+ ):

+ server_url = self._effective_server_url(docling_server_url)

+ if not server_url:

+ raise RuntimeError("[Docling] DOCLING_SERVER_URL is not configured.")

+

+ timeout = request_timeout or self.request_timeout

+ if binary is not None:

+ if isinstance(binary, (bytes, bytearray)):

+ pdf_bytes = bytes(binary)

+ else:

+ pdf_bytes = bytes(binary.getbuffer())

+ else:

+ src_path = Path(filepath)

+ if not src_path.exists():

+ raise FileNotFoundError(f"PDF not found: {src_path}")

+ with open(src_path, "rb") as f:

+ pdf_bytes = f.read()

+

+ if callback:

+ callback(0.2, f"[Docling] Requesting external server: {server_url}")

+

+ filename = Path(filepath).name or "input.pdf"

+ b64 = base64.b64encode(pdf_bytes).decode("ascii")

+ v1_payload = {

+ "options": {

+ "from_formats": ["pdf"],

+ "to_formats": ["json", "md", "text"],

+ },

+ "sources": [

+ {

+ "kind": "file",

+ "filename": filename,

+ "base64_string": b64,

+ }

+ ],

+ }

+ v1alpha_payload = {

+ "options": {

+ "from_formats": ["pdf"],

+ "to_formats": ["json", "md", "text"],

+ },

+ "file_sources": [

+ {

+ "filename": filename,

+ "base64_string": b64,

+ }

+ ],

+ }

+ errors = []

+ response_json = None

+ for endpoint, payload in (

+ ("/v1/convert/source", v1_payload),

+ ("/v1alpha/convert/source", v1alpha_payload),

+ ):

+ try:

+ resp = requests.post(

+ f"{server_url}{endpoint}",

+ json=payload,

+ timeout=timeout,

+ )

+ if resp.status_code < 300:

+ response_json = resp.json()

+ break

+ errors.append(f"{endpoint}: HTTP {resp.status_code} {resp.text[:300]}")

+ except Exception as exc:

+ errors.append(f"{endpoint}: {exc}")

+

+ if response_json is None:

+ raise RuntimeError("[Docling] remote convert failed: " + " | ".join(errors))

+

+ docs = self._extract_remote_document_entries(response_json)

+ if not docs:

+ raise RuntimeError("[Docling] remote response does not contain parsed documents.")

+

+ sections: list[tuple[str, ...]] = []

+ tables = []

+ for doc in docs:

+ md = doc.get("md_content")

+ txt = doc.get("text_content")

+ if isinstance(md, str) and md.strip():

+ sections.extend(self._sections_from_remote_text(md, parse_method=parse_method))

+ elif isinstance(txt, str) and txt.strip():

+ sections.extend(self._sections_from_remote_text(txt, parse_method=parse_method))

+

+ json_content = doc.get("json_content")

+ if isinstance(json_content, dict):

+ md_fallback = json_content.get("md_content")

+ if isinstance(md_fallback, str) and md_fallback.strip() and not sections:

+ sections.extend(self._sections_from_remote_text(md_fallback, parse_method=parse_method))

+

+ if callback:

+ callback(0.95, f"[Docling] Remote sections: {len(sections)}")

+ return sections, tables

+

def parse_pdf(

self,

filepath: str | PathLike[str],

@@ -295,12 +453,26 @@ def parse_pdf(

lang: Optional[str] = None,

method: str = "auto",

delete_output: bool = True,

- parse_method: str = "raw"

+ parse_method: str = "raw",

+ docling_server_url: Optional[str] = None,

+ request_timeout: Optional[int] = None,

):

+ self.outlines = extract_pdf_outlines(binary if binary is not None else filepath)

- if not self.check_installation():

+ if not self.check_installation(docling_server_url=docling_server_url):

raise RuntimeError("Docling not available, please install `docling`")

+ server_url = self._effective_server_url(docling_server_url)

+ if server_url:

+ return self._parse_pdf_remote(

+ filepath=filepath,

+ binary=binary,

+ callback=callback,

+ parse_method=parse_method,

+ docling_server_url=server_url,

+ request_timeout=request_timeout,

+ )

+

if binary is not None:

tmpdir = Path(output_dir) if output_dir else Path.cwd() / ".docling_tmp"

tmpdir.mkdir(parents=True, exist_ok=True)
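
The `_effective_server_url` helper in this diff resolves the Docling server URL in a fixed order: the per-call argument first, then the value given to the constructor, then the `DOCLING_SERVER_URL` environment variable. The standalone sketch below restates that precedence outside the class so it can be run directly. The function name `effective_server_url` and the example hosts are illustrative only.

```python
import os
from typing import Optional


def effective_server_url(call_url: Optional[str], instance_url: str = "") -> str:
    """Resolve the server URL: call argument, then instance value, then environment.

    Trailing slashes are stripped so endpoint paths such as "/v1/convert/source"
    can be appended directly.
    """
    explicit = (call_url or instance_url or "").rstrip("/")
    return explicit or os.environ.get("DOCLING_SERVER_URL", "").rstrip("/")


os.environ["DOCLING_SERVER_URL"] = "http://env-host:5001/"
print(effective_server_url("http://call-host/", "http://inst-host"))
print(effective_server_url(None, "http://inst-host/"))
print(effective_server_url(None, ""))
```

An empty result means no remote server is configured. In that case `parse_pdf` in the diff continues with the local `docling` code path.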

diff --git a/deepdoc/parser/docx_parser.py b/deepdoc/parser/docx_parser.py

index 2a65841e246..0257a320f7f 100644

--- a/deepdoc/parser/docx_parser.py

+++ b/deepdoc/parser/docx_parser.py

@@ -20,9 +20,54 @@

from collections import Counter

from rag.nlp import rag_tokenizer

from io import BytesIO

-

+import logging

+from docx.image.exceptions import (

+ InvalidImageStreamError,

+ UnexpectedEndOfFileError,

+ UnrecognizedImageError,

+)

+from rag.utils.lazy_image import LazyImage

class RAGFlowDocxParser:

+ def get_picture(self, document, paragraph):

+ imgs = paragraph._element.xpath(".//pic:pic")

+ if not imgs:

+ return None

+ image_blobs = []

+ for img in imgs:

+ embed = img.xpath(".//a:blip/@r:embed")

+ if not embed:

+ continue

+ embed = embed[0]

+ image_blob = None

+ try:

+ related_part = document.part.related_parts[embed]

+ except Exception as e:

+ logging.warning(f"Skipping image due to unexpected error getting related_part: {e}")

+ continue

+

+ try:

+ image = related_part.image

+ if image is not None:

+ image_blob = image.blob

+ except (

+ UnrecognizedImageError,

+ UnexpectedEndOfFileError,

+ InvalidImageStreamError,

+ UnicodeDecodeError,

+ ) as e:

+ logging.info(f"Damaged image encountered, attempting blob fallback: {e}")

+ except Exception as e:

+ logging.warning(f"Unexpected error getting image, attempting blob fallback: {e}")

+

+ if image_blob is None:

+ image_blob = getattr(related_part, "blob", None)

+ if image_blob:

+ image_blobs.append(image_blob)

+ if not image_blobs:

+ return None

+ return LazyImage(image_blobs)

+

def __extract_table_content(self, tb):

df = []

diff --git a/deepdoc/parser/epub_parser.py b/deepdoc/parser/epub_parser.py

new file mode 100644

index 00000000000..5badd7c33b6

--- /dev/null

+++ b/deepdoc/parser/epub_parser.py

@@ -0,0 +1,145 @@

+#

+# Copyright 2025 The InfiniFlow Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+import logging

+import warnings

+import zipfile

+from io import BytesIO

+from xml.etree import ElementTree

+

+from .html_parser import RAGFlowHtmlParser

+

+# OPF XML namespaces

+_OPF_NS = "http://www.idpf.org/2007/opf"

+_CONTAINER_NS = "urn:oasis:names:tc:opendocument:xmlns:container"

+

+# Media types that contain readable XHTML content

+_XHTML_MEDIA_TYPES = {"application/xhtml+xml", "text/html", "text/xml"}

+

+logger = logging.getLogger(__name__)

+

+

+class RAGFlowEpubParser:

+ """Parse EPUB files by extracting XHTML content in spine (reading) order

+ and delegating to RAGFlowHtmlParser for chunking."""

+

+ def __call__(self, fnm, binary=None, chunk_token_num=512):

+ if binary is not None:

+ if not binary:

+ logger.warning(

+ "RAGFlowEpubParser received an empty EPUB binary payload for %r",

+ fnm,

+ )

+ raise ValueError("Empty EPUB binary payload")

+ zf = zipfile.ZipFile(BytesIO(binary))

+ else:

+ zf = zipfile.ZipFile(fnm)

+

+ try:

+ content_items = self._get_spine_items(zf)

+ all_sections = []

+ html_parser = RAGFlowHtmlParser()

+

+ for item_path in content_items:

+ try:

+ html_bytes = zf.read(item_path)

+ except KeyError:

+ continue

+ if not html_bytes:

+ logger.debug("Skipping empty EPUB content item: %s", item_path)

+ continue

+ with warnings.catch_warnings():

+ warnings.filterwarnings("ignore", category=UserWarning)

+ sections = html_parser(

+ item_path, binary=html_bytes, chunk_token_num=chunk_token_num

+ )

+ all_sections.extend(sections)

+

+ return all_sections

+ finally:

+ zf.close()

+

+ @staticmethod

+ def _get_spine_items(zf):

+ """Return content file paths in spine (reading) order."""

+ # 1. Find the OPF file path from META-INF/container.xml

+ try:

+ container_xml = zf.read("META-INF/container.xml")

+ except KeyError:

+ return RAGFlowEpubParser._fallback_xhtml_order(zf)

+

+ try:

+ container_root = ElementTree.fromstring(container_xml)

+ except ElementTree.ParseError:

+ logger.warning("Failed to parse META-INF/container.xml; falling back to XHTML order.")

+ return RAGFlowEpubParser._fallback_xhtml_order(zf)

+

+ rootfile_el = container_root.find(f".//{{{_CONTAINER_NS}}}rootfile")

+ if rootfile_el is None:

+ return RAGFlowEpubParser._fallback_xhtml_order(zf)

+

+ opf_path = rootfile_el.get("full-path", "")

+ if not opf_path:

+ return RAGFlowEpubParser._fallback_xhtml_order(zf)

+

+ # Base directory of the OPF file (content paths are relative to it)

+ opf_dir = opf_path.rsplit("/", 1)[0] + "/" if "/" in opf_path else ""

+

+ # 2. Parse the OPF file

+ try:

+ opf_xml = zf.read(opf_path)

+ except KeyError:

+ return RAGFlowEpubParser._fallback_xhtml_order(zf)

+

+ try:

+ opf_root = ElementTree.fromstring(opf_xml)

+ except ElementTree.ParseError:

+ logger.warning("Failed to parse OPF file '%s'; falling back to XHTML order.", opf_path)

+ return RAGFlowEpubParser._fallback_xhtml_order(zf)

+

+ # 3. Build id->href+mediatype map from <manifest>

+ manifest = {}

+ for item in opf_root.findall(f".//{{{_OPF_NS}}}item"):

+ item_id = item.get("id", "")

+ href = item.get("href", "")

+ media_type = item.get("media-type", "")

+ if item_id and href:

+ manifest[item_id] = (href, media_type)

+

+ # 4. Walk <spine> to get reading order

+ spine_items = []

+ for itemref in opf_root.findall(f".//{{{_OPF_NS}}}itemref"):

+ idref = itemref.get("idref", "")

+ if idref not in manifest:

+ continue

+ href, media_type = manifest[idref]

+ if media_type not in _XHTML_MEDIA_TYPES:

+ continue

+ spine_items.append(opf_dir + href)

+

+ return (

+ spine_items if spine_items else RAGFlowEpubParser._fallback_xhtml_order(zf)

+ )

+

+    @staticmethod
+    def _fallback_xhtml_order(zf):
+        """Fallback: return all .xhtml/.html/.htm files sorted alphabetically."""
+        return sorted(
+            n
+            for n in zf.namelist()
+            if n.lower().endswith((".xhtml", ".html", ".htm"))
+            and not n.startswith("META-INF/")
+        )
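
The spine lookup in `_get_spine_items` follows three steps: read `META-INF/container.xml` to find the OPF file, build an id-to-href map from the manifest, then walk the spine in order. The sketch below reproduces those steps on a tiny EPUB built in memory, using only the standard library. The archive contents and the names `build_epub` and `spine_order` are made up for illustration. Unlike the parser, it accepts only `application/xhtml+xml` items and has none of the fallbacks.

```python
import zipfile
from io import BytesIO
from xml.etree import ElementTree

OPF_NS = "http://www.idpf.org/2007/opf"
CONTAINER_NS = "urn:oasis:names:tc:opendocument:xmlns:container"

CONTAINER_XML = """<?xml version="1.0"?>
<container version="1.0" xmlns="urn:oasis:names:tc:opendocument:xmlns:container">
  <rootfiles>
    <rootfile full-path="OEBPS/content.opf" media-type="application/oebps-package+xml"/>
  </rootfiles>
</container>"""

# The spine deliberately lists ch2 before ch1 so that reading order differs
# from alphabetical file order.
OPF_XML = """<?xml version="1.0"?>
<package xmlns="http://www.idpf.org/2007/opf" version="3.0">
  <manifest>
    <item id="ch1" href="a_first.xhtml" media-type="application/xhtml+xml"/>
    <item id="ch2" href="z_second.xhtml" media-type="application/xhtml+xml"/>
    <item id="css" href="style.css" media-type="text/css"/>
  </manifest>
  <spine>
    <itemref idref="ch2"/>
    <itemref idref="ch1"/>
  </spine>
</package>"""


def build_epub() -> bytes:
    """Create a minimal EPUB-like zip archive in memory."""
    buf = BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("META-INF/container.xml", CONTAINER_XML)
        zf.writestr("OEBPS/content.opf", OPF_XML)
        zf.writestr("OEBPS/a_first.xhtml", "<html><body><p>one</p></body></html>")
        zf.writestr("OEBPS/z_second.xhtml", "<html><body><p>two</p></body></html>")
    return buf.getvalue()


def spine_order(epub_bytes: bytes) -> list[str]:
    """Return XHTML content paths in spine (reading) order."""
    with zipfile.ZipFile(BytesIO(epub_bytes)) as zf:
        container = ElementTree.fromstring(zf.read("META-INF/container.xml"))
        opf_path = container.find(f".//{{{CONTAINER_NS}}}rootfile").get("full-path")
        opf = ElementTree.fromstring(zf.read(opf_path))
    # Content hrefs are relative to the directory that holds the OPF file.
    opf_dir = opf_path.rsplit("/", 1)[0] + "/" if "/" in opf_path else ""
    manifest = {
        item.get("id"): (item.get("href"), item.get("media-type"))
        for item in opf.findall(f".//{{{OPF_NS}}}item")
    }
    ordered = []
    for itemref in opf.findall(f".//{{{OPF_NS}}}itemref"):
        href, media_type = manifest.get(itemref.get("idref"), (None, None))
        if href and media_type == "application/xhtml+xml":
            ordered.append(opf_dir + href)
    return ordered


print(spine_order(build_epub()))
```

Because the spine lists `ch2` first, the result differs from what an alphabetical sort like `_fallback_xhtml_order` would give. That difference is why the parser prefers the spine when it is available.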

diff --git a/deepdoc/parser/excel_parser.py b/deepdoc/parser/excel_parser.py

index 2fe3420192c..acbd98f228a 100644

--- a/deepdoc/parser/excel_parser.py

+++ b/deepdoc/parser/excel_parser.py

@@ -18,9 +18,9 @@

import pandas as pd

from openpyxl import Workbook, load_workbook

-from PIL import Image

from rag.nlp import find_codec

+from rag.utils.lazy_image import LazyImage

# copied from `/openpyxl/cell/cell.py`

ILLEGAL_CHARACTERS_RE = re.compile(r"[\000-\010]|[\013-\014]|[\016-\037]")

@@ -74,9 +74,16 @@ def clean_string(s):

return df.apply(lambda col: col.map(clean_string))

+    @staticmethod
+    def _fill_worksheet_from_dataframe(ws, df: pd.DataFrame):
+        # Row 1 holds the column headers.
+        for col_num, column_name in enumerate(df.columns, 1):
+            ws.cell(row=1, column=col_num, value=column_name)
+        # Data rows start at row 2.
+        for row_num, row in enumerate(df.values, 2):
+            for col_num, value in enumerate(row, 1):
+                ws.cell(row=row_num, column=col_num, value=value)

+

@staticmethod

def _dataframe_to_workbook(df):

- # if contains multiple sheets use _dataframes_to_workbook

if isinstance(df, dict) and len(df) > 1:

return RAGFlowExcelParser._dataframes_to_workbook(df)

@@ -84,30 +91,19 @@ def _dataframe_to_workbook(df):

wb = Workbook()

ws = wb.active

ws.title = "Data"

-

- for col_num, column_name in enumerate(df.columns, 1):

- ws.cell(row=1, column=col_num, value=column_name)

-

- for row_num, row in enumerate(df.values, 2):

- for col_num, value in enumerate(row, 1):

- ws.cell(row=row_num, column=col_num, value=value)

-

+ RAGFlowExcelParser._fill_worksheet_from_dataframe(ws, df)

return wb

-

+

@staticmethod

def _dataframes_to_workbook(dfs: dict):

wb = Workbook()

default_sheet = wb.active

wb.remove(default_sheet)

-

+

for sheet_name, df in dfs.items():

df = RAGFlowExcelParser._clean_dataframe(df)

ws = wb.create_sheet(title=sheet_name)

- for col_num, column_name in enumerate(df.columns, 1):

- ws.cell(row=1, column=col_num, value=column_name)

- for row_num, row in enumerate(df.values, 2):

- for col_num, value in enumerate(row, 1):

- ws.cell(row=row_num, column=col_num, value=value)

+ RAGFlowExcelParser._fill_worksheet_from_dataframe(ws, df)

return wb

@staticmethod

@@ -126,7 +122,7 @@ def _extract_images_from_worksheet(ws, sheetname=None):

for img in images:

try:

img_bytes = img._data()

- pil_img = Image.open(BytesIO(img_bytes)).convert("RGB")

+ lazy_img = LazyImage([img_bytes])

anchor = img.anchor

if hasattr(anchor, "_from") and hasattr(anchor, "_to"):

@@ -143,7 +139,7 @@ def _extract_images_from_worksheet(ws, sheetname=None):

item = {

"sheet": sheetname or ws.title,

- "image": pil_img,

+ "image": lazy_img,

"image_description": "",

"row_from": r1,

"col_from": c1,

diff --git a/deepdoc/parser/figure_parser.py b/deepdoc/parser/figure_parser.py

index ec5e333de28..e062f462538 100644

--- a/deepdoc/parser/figure_parser.py

+++ b/deepdoc/parser/figure_parser.py

@@ -20,29 +20,36 @@

from common.constants import LLMType

from api.db.services.llm_service import LLMBundle

+from api.db.joint_services.tenant_model_service import get_tenant_default_model_by_type

from common.connection_utils import timeout

from rag.app.picture import vision_llm_chunk as picture_vision_llm_chunk

from rag.prompts.generator import vision_llm_figure_describe_prompt, vision_llm_figure_describe_prompt_with_context

from rag.nlp import append_context2table_image4pdf

+from rag.utils.lazy_image import ensure_pil_image, open_image_for_processing, is_image_like

# need to delete before pr

def vision_figure_parser_figure_data_wrapper(figures_data_without_positions):

if not figures_data_without_positions:

return []

- return [

- (

- (figure_data[1], [figure_data[0]]),

- [(0, 0, 0, 0, 0)],

+ res = []

+ for figure_data in figures_data_without_positions:

+ img = ensure_pil_image(figure_data[1])

+ if not isinstance(img, Image.Image):

+ continue

+ res.append(

+ (

+ (img, [figure_data[0]]),

+ [(0, 0, 0, 0, 0)],

+ )

)

- for figure_data in figures_data_without_positions

- if isinstance(figure_data[1], Image.Image)

- ]

+ return res

def vision_figure_parser_docx_wrapper(sections, tbls, callback=None,**kwargs):

if not sections:

return tbls

try:

- vision_model = LLMBundle(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model_config = get_tenant_default_model_by_type(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model = LLMBundle(kwargs["tenant_id"], vision_model_config)

callback(0.7, "Visual model detected. Attempting to enhance figure extraction...")

except Exception:

vision_model = None

@@ -61,13 +68,14 @@ def vision_figure_parser_figure_xlsx_wrapper(images,callback=None, **kwargs):

if not images:

return []

try:

- vision_model = LLMBundle(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model_config = get_tenant_default_model_by_type(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model = LLMBundle(kwargs["tenant_id"], vision_model_config)

callback(0.2, "Visual model detected. Attempting to enhance Excel image extraction...")

except Exception:

vision_model = None

if vision_model:

figures_data = [((

- img["image"], # Image.Image

+ img["image"], # Image.Image or LazyImage (converted by ensure_pil_image)

[img["image_description"]] # description list (must be list)

),

[

@@ -89,14 +97,15 @@ def vision_figure_parser_pdf_wrapper(tbls, callback=None, **kwargs):

parser_config = kwargs.get("parser_config", {})

context_size = max(0, int(parser_config.get("image_context_size", 0) or 0))

try:

- vision_model = LLMBundle(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model_config = get_tenant_default_model_by_type(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model = LLMBundle(kwargs["tenant_id"], vision_model_config)

callback(0.7, "Visual model detected. Attempting to enhance figure extraction...")

except Exception:

vision_model = None

if vision_model:

def is_figure_item(item):

- return isinstance(item[0][0], Image.Image) and isinstance(item[0][1], list)

+ return is_image_like(item[0][0]) and isinstance(item[0][1], list)

figures_data = [item for item in tbls if is_figure_item(item)]

figure_contexts = []

@@ -127,13 +136,17 @@ def vision_figure_parser_docx_wrapper_naive(chunks, idx_lst, callback=None, **kw

if not chunks:

return []

try:

- vision_model = LLMBundle(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model_config = get_tenant_default_model_by_type(kwargs["tenant_id"], LLMType.IMAGE2TEXT)

+ vision_model = LLMBundle(kwargs["tenant_id"], vision_model_config)

callback(0.7, "Visual model detected. Attempting to enhance figure extraction...")

except Exception:

vision_model = None

if vision_model:

@timeout(30, 3)

def worker(idx, ck):

+ img, close_after = open_image_for_processing(ck.get("image"), allow_bytes=True)

+ if not isinstance(img, Image.Image):

+ return idx, ""

context_above = ck.get("context_above", "")

context_below = ck.get("context_below", "")

if context_above or context_below:

@@ -149,13 +162,20 @@ def worker(idx, ck):

prompt = vision_llm_figure_describe_prompt()

logging.info(f"[VisionFigureParser] figure={idx} context_len=0 prompt=default")

- description_text = picture_vision_llm_chunk(

- binary=ck.get("image"),

- vision_model=vision_model,

- prompt=prompt,

- callback=callback,

- )

- return idx, description_text

+ try:

+ description_text = picture_vision_llm_chunk(

+ binary=img,

+ vision_model=vision_model,

+ prompt=prompt,

+ callback=callback,

+ )

+ return idx, description_text

+ finally:

+ if close_after and isinstance(img, Image.Image):

+ try:

+ img.close()

+ except Exception:

+ pass

with ThreadPoolExecutor(max_workers=10) as executor:

futures = [

@@ -187,13 +207,19 @@ def _extract_figures_info(self, figures_data):

# position

if len(item) == 2 and isinstance(item[0], tuple) and len(item[0]) == 2 and isinstance(item[1], list) and isinstance(item[1][0], tuple) and len(item[1][0]) == 5:

img_desc = item[0]

- assert len(img_desc) == 2 and isinstance(img_desc[0], Image.Image) and isinstance(img_desc[1], list), "Should be (figure, [description])"

- self.figures.append(img_desc[0])

+ img = ensure_pil_image(img_desc[0])

+ if img is None:

+ continue

+ assert len(img_desc) == 2 and isinstance(img_desc[1], list), "Should be (figure, [description])"

+ self.figures.append(img)

self.descriptions.append(img_desc[1])

self.positions.append(item[1])

else:

- assert len(item) == 2 and isinstance(item[0], Image.Image) and isinstance(item[1], list), f"Unexpected form of figure data: get {len(item)=}, {item=}"

- self.figures.append(item[0])

+ img = ensure_pil_image(item[0])

+ if img is None:

+ continue

+ assert len(item) == 2 and isinstance(item[1], list), f"Unexpected form of figure data: get {len(item)=}, {item=}"

+ self.figures.append(img)

self.descriptions.append(item[1])

def _assemble(self):

diff --git a/deepdoc/parser/html_parser.py b/deepdoc/parser/html_parser.py

index dcf33a8bbd1..f4d360c6413 100644

--- a/deepdoc/parser/html_parser.py

+++ b/deepdoc/parser/html_parser.py

@@ -33,7 +33,7 @@ def get_encoding(file):

"table", "pre", "code", "blockquote",

"figure", "figcaption"

]

-TITLE_TAGS = {"h1": "#", "h2": "##", "h3": "###", "h4": "#####", "h5": "#####", "h6": "######"}

+TITLE_TAGS = {"h1": "#", "h2": "##", "h3": "###", "h4": "####", "h5": "#####", "h6": "######"}

class RAGFlowHtmlParser:

diff --git a/deepdoc/parser/markdown_parser.py b/deepdoc/parser/markdown_parser.py

index 900ef525ccf..e911a22ac8e 100644

--- a/deepdoc/parser/markdown_parser.py

+++ b/deepdoc/parser/markdown_parser.py

@@ -56,7 +56,7 @@ def replace_tables_with_rendered_html(pattern, table_list, render=True):

""",

re.VERBOSE,

)

- working_text = replace_tables_with_rendered_html(border_table_pattern, tables)

+ working_text = replace_tables_with_rendered_html(border_table_pattern, tables, render=separate_tables)

# Borderless Markdown table

no_border_table_pattern = re.compile(

@@ -68,7 +68,7 @@ def replace_tables_with_rendered_html(pattern, table_list, render=True):

""",

re.VERBOSE,

)

- working_text = replace_tables_with_rendered_html(no_border_table_pattern, tables)

+ working_text = replace_tables_with_rendered_html(no_border_table_pattern, tables, render=separate_tables)

# Replace any TAGS e.g. to

TAGS = ["table", "td", "tr", "th", "tbody", "thead", "div"]

diff --git a/deepdoc/parser/mineru_parser.py b/deepdoc/parser/mineru_parser.py

index cc4c99c76b8..25a0627ff41 100644

--- a/deepdoc/parser/mineru_parser.py

+++ b/deepdoc/parser/mineru_parser.py

@@ -35,6 +35,7 @@

from strenum import StrEnum

from deepdoc.parser.pdf_parser import RAGFlowPdfParser

+from deepdoc.parser.utils import extract_pdf_outlines

LOCK_KEY_pdfplumber = "global_shared_lock_pdfplumber"

if LOCK_KEY_pdfplumber not in sys.modules:

@@ -73,6 +74,8 @@ class MinerUContentType(StrEnum):

'Thai': 'th',

'Greek': 'el',

'Hindi': 'devanagari',

+ 'Bulgarian': 'cyrillic',

+ 'Turkish': 'latin',

}

@@ -339,6 +342,11 @@ def _line_tag(self, bx):

pn = [bx["page_idx"] + 1]

positions = bx.get("bbox", (0, 0, 0, 0))

x0, top, x1, bott = positions

+ # Normalize flipped coordinates (MinerU may report inverted bbox for flipped images)

+ if x0 > x1:

+ x0, x1 = x1, x0

+ if top > bott:

+ top, bott = bott, top

if hasattr(self, "page_images") and self.page_images and len(self.page_images) > bx["page_idx"]:

page_width, page_height = self.page_images[bx["page_idx"]].size

@@ -428,6 +436,12 @@ def crop(self, text, ZM=1, need_position=False):

img0 = self.page_images[pns[0]]

x0, y0, x1, y1 = int(left), int(top), int(right), int(min(bottom, img0.size[1]))

+ if x0 > x1:

+ x0, x1 = x1, x0

+ if y0 > y1:

+ y0, y1 = y1, y0

+ if x1 <= x0 or y1 <= y0:

+ continue

crop0 = img0.crop((x0, y0, x1, y1))

imgs.append(crop0)

if 0 < ii < len(poss) - 1:

@@ -441,6 +455,13 @@ def crop(self, text, ZM=1, need_position=False):

continue

page = self.page_images[pn]

x0, y0, x1, y1 = int(left), 0, int(right), int(min(bottom, page.size[1]))

+ if x0 > x1:

+ x0, x1 = x1, x0

+ if y0 > y1:

+ y0, y1 = y1, y0

+ if x1 <= x0 or y1 <= y0:

+ bottom -= page.size[1]

+ continue

cimgp = page.crop((x0, y0, x1, y1))

imgs.append(cimgp)

if 0 < ii < len(poss) - 1:

@@ -556,7 +577,7 @@ def _transfer_to_sections(self, outputs: list[dict[str, Any]], parse_method: str

case MinerUContentType.DISCARDED:

continue # Skip discarded blocks entirely

- if section and parse_method == "manual":

+ if section and parse_method in {"manual", "pipeline"}:

sections.append((section, output["type"], self._line_tag(output)))

elif section and parse_method == "paper":

sections.append((section + self._line_tag(output), output["type"]))

@@ -582,6 +603,7 @@ def parse_pdf(

) -> tuple:

import shutil

+ self.outlines = extract_pdf_outlines(binary if binary is not None else filepath)

temp_pdf = None

created_tmp_dir = False

diff --git a/deepdoc/parser/paddleocr_parser.py b/deepdoc/parser/paddleocr_parser.py

index 85db63b862d..a23852e89c0 100644

--- a/deepdoc/parser/paddleocr_parser.py

+++ b/deepdoc/parser/paddleocr_parser.py

@@ -36,6 +36,8 @@

class RAGFlowPdfParser:

pass

+from deepdoc.parser.utils import extract_pdf_outlines

+

AlgorithmType = Literal["PaddleOCR-VL"]

SectionTuple = tuple[str, ...]

@@ -59,11 +61,22 @@ def _remove_images_from_markdown(markdown: str) -> str:

return _MARKDOWN_IMAGE_PATTERN.sub("", markdown)

+def _normalize_bbox(bbox: list[Any] | tuple[Any, ...]) -> tuple[float, float, float, float]:

+ if len(bbox) < 4:

+ return 0.0, 0.0, 0.0, 0.0

+

+ left, top, right, bottom = (float(bbox[0]), float(bbox[1]), float(bbox[2]), float(bbox[3]))

+ if left > right:

+ left, right = right, left

+ if top > bottom:

+ top, bottom = bottom, top

+ return left, top, right, bottom

+

+

@dataclass

class PaddleOCRVLConfig:

"""Configuration for PaddleOCR-VL algorithm."""

- use_doc_orientation_classify: Optional[bool] = False

use_doc_orientation_classify: Optional[bool] = False

use_doc_unwarping: Optional[bool] = False

use_layout_detection: Optional[bool] = None

@@ -199,6 +212,7 @@ def __init__(

"""Initialize PaddleOCR parser."""

super().__init__()

+ self.outlines = []

self.api_url = api_url.rstrip("/") if api_url else os.getenv("PADDLEOCR_API_URL", "")

self.access_token = access_token or os.getenv("PADDLEOCR_ACCESS_TOKEN")

self.algorithm = algorithm

@@ -241,6 +255,7 @@ def parse_pdf(

**kwargs: Any,

) -> ParseResult:

"""Parse PDF document using PaddleOCR API."""

+ self.outlines = extract_pdf_outlines(binary if binary is not None else filepath)

# Create configuration - pass all kwargs to capture VL config parameters

config_dict = {

"api_url": api_url if api_url is not None else self.api_url,

@@ -393,10 +408,11 @@ def _transfer_to_sections(self, result: dict[str, Any], algorithm: AlgorithmType

label = block.get("block_label", "")

block_bbox = block.get("block_bbox", [0, 0, 0, 0])

+ left, top, right, bottom = _normalize_bbox(block_bbox)

- tag = f"@@{page_idx + 1}\t{block_bbox[0] // self._ZOOMIN}\t{block_bbox[2] // self._ZOOMIN}\t{block_bbox[1] // self._ZOOMIN}\t{block_bbox[3] // self._ZOOMIN}##"

+ tag = f"@@{page_idx + 1}\t{left // self._ZOOMIN}\t{right // self._ZOOMIN}\t{top // self._ZOOMIN}\t{bottom // self._ZOOMIN}##"

- if parse_method == "manual":

+ if parse_method in {"manual", "pipeline"}:

sections.append((block_content, label, tag))

elif parse_method == "paper":

sections.append((block_content + tag, label))

@@ -409,7 +425,7 @@ def _transfer_to_tables(self, result: dict[str, Any]) -> list[TableTuple]:

"""Convert API response to table tuples."""

return []

- def __images__(self, fnm, page_from=0, page_to=100, callback=None):

+ def __images__(self, fnm, page_from=0, page_to=10**9, callback=None):

"""Generate page images from PDF for cropping."""

self.page_from = page_from

self.page_to = page_to

@@ -509,6 +525,16 @@ def crop(self, text: str, need_position: bool = False):

img0 = self.page_images[pns[0]]

x0, y0, x1, y1 = int(left), int(top), int(right), int(min(bottom, img0.size[1]))

+ if x0 > x1:

+ x0, x1 = x1, x0

+ if y0 > y1:

+ y0, y1 = y1, y0

+ x0 = max(0, min(x0, img0.size[0]))

+ x1 = max(0, min(x1, img0.size[0]))

+ y0 = max(0, min(y0, img0.size[1]))

+ y1 = max(0, min(y1, img0.size[1]))

+ if x1 <= x0 or y1 <= y0:

+ continue

crop0 = img0.crop((x0, y0, x1, y1))

imgs.append(crop0)

if 0 < ii < len(poss) - 1:

@@ -521,6 +547,17 @@ def crop(self, text: str, need_position: bool = False):

continue

page = self.page_images[pn]

x0, y0, x1, y1 = int(left), 0, int(right), int(min(bottom, page.size[1]))

+ if x0 > x1:

+ x0, x1 = x1, x0

+ if y0 > y1:

+ y0, y1 = y1, y0

+ x0 = max(0, min(x0, page.size[0]))

+ x1 = max(0, min(x1, page.size[0]))

+ y0 = max(0, min(y0, page.size[1]))

+ y1 = max(0, min(y1, page.size[1]))

+ if x1 <= x0 or y1 <= y0:

+ bottom -= page.size[1]

+ continue

cimgp = page.crop((x0, y0, x1, y1))

imgs.append(cimgp)

if 0 < ii < len(poss) - 1:

@@ -532,21 +569,25 @@ def crop(self, text: str, need_position: bool = False):

return None, None

return

- height = 0

+ total_height = 0

+ max_width = 0

+ img_sizes = []

for img in imgs:

- height += img.size[1] + GAP

- height = int(height)

- width = int(np.max([i.size[0] for i in imgs]))

- pic = Image.new("RGB", (width, height), (245, 245, 245))

- height = 0

- for ii, img in enumerate(imgs):

- if ii == 0 or ii + 1 == len(imgs):

+ w, h = img.size

+ img_sizes.append((w, h))

+ max_width = max(max_width, w)

+ total_height += h + GAP

+

+ pic = Image.new("RGB", (max_width, int(total_height)), (245, 245, 245))

+ current_height = 0

+ imgs_count = len(imgs)

+ for ii, (img, (w, h)) in enumerate(zip(imgs, img_sizes)):

+ if ii == 0 or ii + 1 == imgs_count:

img = img.convert("RGBA")

- overlay = Image.new("RGBA", img.size, (0, 0, 0, 0))

- overlay.putalpha(128)

+ overlay = Image.new("RGBA", img.size, (0, 0, 0, 128))

img = Image.alpha_composite(img, overlay).convert("RGB")

- pic.paste(img, (0, int(height)))

- height += img.size[1] + GAP

+ pic.paste(img, (0, int(current_height)))

+ current_height += h + GAP

if need_position:

return pic, positions

diff --git a/deepdoc/parser/pdf_parser.py b/deepdoc/parser/pdf_parser.py

index 6681e4a893a..b3a6adec8b5 100644

--- a/deepdoc/parser/pdf_parser.py

+++ b/deepdoc/parser/pdf_parser.py

@@ -22,6 +22,7 @@

import re

import sys

import threading

+import unicodedata

from collections import Counter, defaultdict

from copy import deepcopy

from io import BytesIO

@@ -37,10 +38,10 @@

from sklearn.metrics import silhouette_score

from common.file_utils import get_project_base_directory

-from common.misc_utils import pip_install_torch

from deepdoc.vision import OCR, AscendLayoutRecognizer, LayoutRecognizer, Recognizer, TableStructureRecognizer

from rag.nlp import rag_tokenizer

from rag.prompts.generator import vision_llm_describe_prompt

+from deepdoc.parser.utils import extract_pdf_outlines

from common import settings

@@ -89,14 +90,9 @@ def __init__(self, **kwargs):

self.tbl_det = TableStructureRecognizer()

self.updown_cnt_mdl = xgb.Booster()

- try:

- pip_install_torch()

- import torch.cuda

-

- if torch.cuda.is_available():

- self.updown_cnt_mdl.set_param({"device": "cuda"})

- except Exception:

- logging.info("No torch found.")

+ # xgboost model is very small; using CPU explicitly

+ self.updown_cnt_mdl.set_param({"device": "cpu"})

+ logging.info("updown_cnt_mdl initialized on CPU")

try:

model_dir = os.path.join(get_project_base_directory(), "rag/res/deepdoc")

self.updown_cnt_mdl.load_model(os.path.join(model_dir, "updown_concat_xgb.model"))

@@ -197,6 +193,127 @@ def _has_color(self, o):

return False

return True

+ # CID pattern regex for unmapped font characters from pdfminer

+ _CID_PATTERN = re.compile(r"\(cid\s*:\s*\d+\s*\)")

+

+ @staticmethod

+ def _is_garbled_char(ch):

+ """Check if a single character is garbled (unmappable from PDF font encoding).

+

+ A character is considered garbled if it falls into Unicode Private Use Areas

+ or certain replacement/control character ranges that typically indicate

+ pdfminer failed to map a CID to a valid Unicode codepoint.

+ """

+ if not ch:

+ return False

+ cp = ord(ch)

+ if 0xE000 <= cp <= 0xF8FF:

+ return True

+ if 0xF0000 <= cp <= 0xFFFFF:

+ return True

+ if 0x100000 <= cp <= 0x10FFFF:

+ return True

+ if cp == 0xFFFD:

+ return True

+ if cp < 0x20 and ch not in ('\t', '\n', '\r'):

+ return True

+ if 0x80 <= cp <= 0x9F:

+ return True

+ cat = unicodedata.category(ch)

+ if cat in ("Cn", "Cs"):

+ return True

+ return False

+

+ @staticmethod

+ def _is_garbled_text(text, threshold=0.5):

+ """Check if a text string contains too many garbled characters.

+

+ Examines each character and determines if the overall proportion

+ of garbled characters exceeds the given threshold. Also detects

+ pdfminer's CID placeholder patterns like '(cid:123)'.

+ """

+ if not text or not text.strip():

+ return False

+ if RAGFlowPdfParser._CID_PATTERN.search(text):

+ return True

+ garbled_count = 0

+ total = 0

+ for ch in text:

+ if ch.isspace():

+ continue

+ total += 1

+ if RAGFlowPdfParser._is_garbled_char(ch):

+ garbled_count += 1

+ if total == 0:

+ return False

+ return garbled_count / total >= threshold

+

+ @staticmethod

+ def _has_subset_font_prefix(fontname):

+ """Check if a font name has a subset prefix (e.g. 'DY1+ZLQDm1-1').

+

+ PDF subset fonts use a 6-letter uppercase tag followed by '+' before

+ the actual font name. Some tools use shorter tags (e.g. 'DY1+').

+ """

+ if not fontname:

+ return False

+ return bool(re.match(r"^[A-Z0-9]{2,6}\+", fontname))

+

+ @staticmethod

+ def _is_garbled_by_font_encoding(page_chars, min_chars=20):

+ """Detect garbled text caused by broken font encoding mappings.

+

+ Some PDFs (especially older Chinese standards) embed custom fonts that

+ map CJK glyphs to ASCII codepoints. The extracted text appears as

+ random ASCII punctuation/symbols instead of actual CJK characters.

+

+ Detection strategy: if a significant proportion of characters come from

+ subset-embedded fonts and the page produces overwhelmingly ASCII

+ (punctuation, digits, symbols) with virtually no CJK/Hangul/Kana

+ characters, the page is likely garbled due to broken font encoding.

+ """

+ if not page_chars or len(page_chars) < min_chars:

+ return False

+

+ subset_font_count = 0

+ total_non_space = 0

+ ascii_punct_sym = 0

+ cjk_like = 0

+

+ for c in page_chars:

+ text = c.get("text", "")

+ fontname = c.get("fontname", "")

+ if not text or text.isspace():

+ continue

+ total_non_space += 1

+

+ if RAGFlowPdfParser._has_subset_font_prefix(fontname):

+ subset_font_count += 1

+

+ cp = ord(text[0])

+ if (0x2E80 <= cp <= 0x9FFF or 0xF900 <= cp <= 0xFAFF

+ or 0x20000 <= cp <= 0x2FA1F

+ or 0xAC00 <= cp <= 0xD7AF

+ or 0x3040 <= cp <= 0x30FF):

+ cjk_like += 1

+ elif (0x21 <= cp <= 0x2F or 0x3A <= cp <= 0x40

+ or 0x5B <= cp <= 0x60 or 0x7B <= cp <= 0x7E):

+ ascii_punct_sym += 1

+

+ if total_non_space < min_chars:

+ return False

+

+ subset_ratio = subset_font_count / total_non_space

+ if subset_ratio < 0.3:

+ return False

+

+ cjk_ratio = cjk_like / total_non_space

+ punct_ratio = ascii_punct_sym / total_non_space

+ if cjk_ratio < 0.05 and punct_ratio > 0.4:

+ return True

+

+ return False

+

def _evaluate_table_orientation(self, table_img, sample_ratio=0.3):

"""

Evaluate the best rotation orientation for a table image.

@@ -585,7 +702,7 @@ def _insert_ocr_boxes(ocr_results, page_index, table_x0, table_top, insert_at, t

def __ocr(self, pagenum, img, chars, ZM=3, device_id: int | None = None):

start = timer()

bxs = self.ocr.detect(np.array(img), device_id)

- logging.info(f"__ocr detecting boxes of a image cost ({timer() - start}s)")

+ logging.info(f"__ocr detecting boxes of an image cost ({timer() - start}s)")

start = timer()

if not bxs:

@@ -618,14 +735,40 @@ def __ocr(self, pagenum, img, chars, ZM=3, device_id: int | None = None):

if not b["chars"]:

del b["chars"]

continue

- m_ht = np.mean([c["height"] for c in b["chars"]])

- for c in Recognizer.sort_Y_firstly(b["chars"], m_ht):

+ box_chars = b["chars"]

+ m_ht = np.mean([c["height"] for c in box_chars])

+ garbled_count = 0

+ total_count = 0

+ for c in Recognizer.sort_Y_firstly(box_chars, m_ht):

if c["text"] == " " and b["text"]:

if re.match(r"[0-9a-zA-Zа-яА-Я,.?;:!%%]", b["text"][-1]):

b["text"] += " "

else:

b["text"] += c["text"]

+ for ch in c["text"]:

+ if not ch.isspace():

+ total_count += 1

+ if self._is_garbled_char(ch):

+ garbled_count += 1

del b["chars"]

+ # If the majority of characters from pdfplumber are garbled,

+ # clear the text so OCR recognition will be used as fallback.

+ # Strategy 1: PUA / unmapped CID characters

+ if total_count > 0 and garbled_count / total_count >= 0.5:

+ logging.info(

+ "Page %d: detected garbled pdfplumber text (garbled=%d/%d), falling back to OCR for box at (%.1f, %.1f)",

+ pagenum, garbled_count, total_count, b["x0"], b["top"],

+ )

+ b["text"] = ""

+ continue

+ # Strategy 2: font-encoding garbling, where subset fonts yield mostly

+ # ASCII punctuation and virtually no CJK output

+ if total_count > 0 and self._is_garbled_by_font_encoding(box_chars, min_chars=5):

+ logging.info(

+ "Page %d: detected font-encoding garbled text (%d chars), falling back to OCR for box at (%.1f, %.1f)",

+ pagenum, total_count, b["x0"], b["top"],

+ )

+ b["text"] = ""

logging.info(f"__ocr sorting {len(chars)} chars cost {timer() - start}s")

start = timer()

@@ -1400,34 +1543,40 @@ def __images__(self, fnm, zoomin=3, page_from=0, page_to=299, callback=None):

logging.warning(f"Failed to extract characters for pages {page_from}-{page_to}: {str(e)}")

self.page_chars = [[] for _ in range(page_to - page_from)] # If failed to extract, using empty list instead.

+ # Detect garbled pages and clear their chars so the OCR

+ # path will be used instead. Two detection strategies:

+ # 1) PUA / unmapped CID characters (threshold=0.3)

+ # 2) Font-encoding garbling: subset fonts mapping CJK to ASCII

+ for pi, page_ch in enumerate(self.page_chars):

+ if not page_ch:

+ continue

+ # Strategy 1: PUA / CID garbling

+ sample = page_ch if len(page_ch) <= 200 else page_ch[:200]

+ sample_text = "".join(c.get("text", "") for c in sample)

+ if self._is_garbled_text(sample_text, threshold=0.3):

+ logging.warning(

+ "Page %d: pdfplumber extracted mostly garbled characters (%d chars), "

+ "clearing to use OCR fallback.",

+ page_from + pi + 1, len(page_ch),

+ )

+ self.page_chars[pi] = []

+ continue

+ # Strategy 2: font-encoding garbling (CJK mapped to ASCII)

+ if self._is_garbled_by_font_encoding(page_ch):

+ logging.warning(

+ "Page %d: detected font-encoding garbled text "

+ "(subset fonts with no CJK output, %d chars), "

+ "clearing to use OCR fallback.",

+ page_from + pi + 1, len(page_ch),

+ )

+ self.page_chars[pi] = []

+

self.total_page = len(self.pdf.pages)

except Exception as e:

logging.exception(f"RAGFlowPdfParser __images__, exception: {e}")

logging.info(f"__images__ dedupe_chars cost {timer() - start}s")

- self.outlines = []

- try:

- with pdf2_read(fnm if isinstance(fnm, str) else BytesIO(fnm)) as pdf:

- self.pdf = pdf

-

- outlines = self.pdf.outline

-

- def dfs(arr, depth):

- for a in arr:

- if isinstance(a, dict):

- self.outlines.append((a["/Title"], depth))

- continue

- dfs(a, depth + 1)

-

- dfs(outlines, 0)

-

- except Exception as e:

- logging.warning(f"Outlines exception: {e}")

-

- if not self.outlines:

- logging.warning("Miss outlines")

-

logging.debug("Images converted.")

self.is_english = [

re.search(r"[ a-zA-Z0-9,/¸;:'\[\]\(\)!@#$%^&*\"?<>._-]{30,}", "".join(random.choices([c["text"] for c in self.page_chars[i]], k=min(100, len(self.page_chars[i])))))

@@ -1535,6 +1684,7 @@ def __call__(self, fnm, need_image=True, zoomin=3, return_html=False, auto_rotat

if auto_rotate_tables is None:

auto_rotate_tables = os.getenv("TABLE_AUTO_ROTATE", "true").lower() in ("true", "1", "yes")

+ self.outlines = extract_pdf_outlines(fnm)

self.__images__(fnm, zoomin)

self._layouts_rec(zoomin)

self._table_transformer_job(zoomin, auto_rotate=auto_rotate_tables)

@@ -1546,6 +1696,7 @@ def __call__(self, fnm, need_image=True, zoomin=3, return_html=False, auto_rotat

def parse_into_bboxes(self, fnm, callback=None, zoomin=3):

start = timer()

+ self.outlines = extract_pdf_outlines(fnm)

self.__images__(fnm, zoomin, callback=callback)

if callback:

callback(0.40, "OCR finished ({:.2f}s)".format(timer() - start))

@@ -1594,19 +1745,41 @@ def min_rectangle_distance(rect1, rect2):

return math.sqrt(dx * dx + dy * dy) # + (pn2-pn1)*10000

for (img, txt), poss in tbls_or_figs:

- bboxes = [(i, (b["page_number"], b["x0"], b["x1"], b["top"], b["bottom"])) for i, b in enumerate(self.boxes)]

- dists = [

- (min_rectangle_distance((pn, left, right, top + self.page_cum_height[pn], bott + self.page_cum_height[pn]), rect), i) for i, rect in bboxes for pn, left, right, top, bott in poss

- ]

- min_i = np.argmin(dists, axis=0)[0]

- min_i, rect = bboxes[dists[min_i][-1]]

+ # Positions coming from _extract_table_figure carry absolute 0-based page

+ # indices (page_from offset). Convert back to chunk-local indices so we

+ # stay consistent with self.boxes/page_cum_height, which are all relative

+ # to the current parsing window.

+ local_poss = []

+ for pn, left, right, top, bott in poss:

+ local_pn = pn - self.page_from

+ if 0 <= local_pn < len(self.page_cum_height) - 1:

+ local_poss.append((local_pn, left, right, top, bott))

+ else:

+ logging.debug(f"Skip out-of-range table/figure position pn={pn}, page_from={self.page_from}")

+ if not local_poss:

+ logging.debug("No valid local positions for table/figure; skip insertion.")

+ continue

+

if isinstance(txt, list):

txt = "\n".join(txt)

- pn, left, right, top, bott = poss[0]

- if self.boxes[min_i]["bottom"] < top + self.page_cum_height[pn]:

- min_i += 1

+ pn, left, right, top, bott = local_poss[0]

+ insert_at = len(self.boxes)

+ bboxes = [(i, (b["page_number"], b["x0"], b["x1"], b["top"], b["bottom"])) for i, b in enumerate(self.boxes)]

+ if bboxes:

+ dists = [

+ (min_rectangle_distance((cand_pn, cand_left, cand_right, cand_top + self.page_cum_height[cand_pn], cand_bott + self.page_cum_height[cand_pn]), rect), i)

+ for i, rect in bboxes

+ for cand_pn, cand_left, cand_right, cand_top, cand_bott in local_poss

+ ]

+ if dists:

+ nearest_bbox_idx = int(np.argmin([dist for dist, _ in dists]))

+ insert_at, _ = bboxes[dists[nearest_bbox_idx][-1]]

+ if self.boxes[insert_at]["bottom"] < top + self.page_cum_height[pn]:

+ insert_at += 1

+ else:

+ logging.debug("No text boxes available; append %s block directly.", layout_type)

self.boxes.insert(

- min_i,

+ insert_at,

{

"page_number": pn + 1,

"x0": left,

@@ -1771,27 +1944,14 @@ def get_position(self, bx, ZM):

class PlainParser:

def __call__(self, filename, from_page=0, to_page=100000, **kwargs):

- self.outlines = []

lines = []

try:

self.pdf = pdf2_read(filename if isinstance(filename, str) else BytesIO(filename))

for page in self.pdf.pages[from_page:to_page]:

lines.extend([t for t in page.extract_text().split("\n")])

-

- outlines = self.pdf.outline

-

- def dfs(arr, depth):

- for a in arr:

- if isinstance(a, dict):

- self.outlines.append((a["/Title"], depth))

- continue

- dfs(a, depth + 1)

-

- dfs(outlines, 0)

except Exception:

logging.exception("Outlines exception")

- if not self.outlines:

- logging.warning("Miss outlines")

+ self.outlines = extract_pdf_outlines(filename)

return [(line, "") for line in lines], []

diff --git a/deepdoc/parser/resume/entities/corporations.py b/deepdoc/parser/resume/entities/corporations.py

index 0396281deed..50359673032 100644

--- a/deepdoc/parser/resume/entities/corporations.py

+++ b/deepdoc/parser/resume/entities/corporations.py

@@ -29,11 +29,12 @@

).fillna(0)

GOODS["cid"] = GOODS["cid"].astype(str)

GOODS = GOODS.set_index(["cid"])

-CORP_TKS = json.load(

- open(os.path.join(current_file_path, "res/corp.tks.freq.json"), "r",encoding="utf-8")

-)

-GOOD_CORP = json.load(open(os.path.join(current_file_path, "res/good_corp.json"), "r",encoding="utf-8"))

-CORP_TAG = json.load(open(os.path.join(current_file_path, "res/corp_tag.json"), "r",encoding="utf-8"))

+with open(os.path.join(current_file_path, "res/corp.tks.freq.json"), "r", encoding="utf-8") as f:

+ CORP_TKS = json.load(f)

+with open(os.path.join(current_file_path, "res/good_corp.json"), "r", encoding="utf-8") as f:

+ GOOD_CORP = json.load(f)

+with open(os.path.join(current_file_path, "res/corp_tag.json"), "r", encoding="utf-8") as f:

+ CORP_TAG = json.load(f)

def baike(cid, default_v=0):

diff --git a/deepdoc/parser/resume/entities/schools.py b/deepdoc/parser/resume/entities/schools.py

index 4425236beb1..5763ca48be5 100644

--- a/deepdoc/parser/resume/entities/schools.py

+++ b/deepdoc/parser/resume/entities/schools.py

@@ -25,7 +25,8 @@

os.path.join(current_file_path, "res/schools.csv"), sep="\t", header=0

).fillna("")

TBL["name_en"] = TBL["name_en"].map(lambda x: x.lower().strip())

-GOOD_SCH = json.load(open(os.path.join(current_file_path, "res/good_sch.json"), "r",encoding="utf-8"))

+with open(os.path.join(current_file_path, "res/good_sch.json"), "r", encoding="utf-8") as f:

+ GOOD_SCH = json.load(f)

GOOD_SCH = set([re.sub(r"[,. &()()]+", "", c) for c in GOOD_SCH])

diff --git a/deepdoc/parser/tcadp_parser.py b/deepdoc/parser/tcadp_parser.py

index af1c9034895..6a37f0befd0 100644

--- a/deepdoc/parser/tcadp_parser.py

+++ b/deepdoc/parser/tcadp_parser.py

@@ -39,6 +39,7 @@

from common.config_utils import get_base_config

from deepdoc.parser.pdf_parser import RAGFlowPdfParser

+from deepdoc.parser.utils import extract_pdf_outlines

class TencentCloudAPIClient:

@@ -392,6 +393,7 @@ def parse_pdf(

) -> tuple:

"""Parse PDF document"""

+ self.outlines = extract_pdf_outlines(binary if binary else filepath)

temp_file = None

created_tmp_dir = False

diff --git a/deepdoc/parser/txt_parser.py b/deepdoc/parser/txt_parser.py

index 64e200cbc66..6abf8591da8 100644

--- a/deepdoc/parser/txt_parser.py

+++ b/deepdoc/parser/txt_parser.py

@@ -40,7 +40,10 @@ def add_chunk(t):

cks.append(t)

tk_nums.append(tnum)

else:

- cks[-1] += t

+ if cks[-1]:

+ cks[-1] += "\n" + t

+ else:

+ cks[-1] += t

tk_nums[-1] += tnum

dels = []

diff --git a/deepdoc/parser/utils.py b/deepdoc/parser/utils.py

index 85a3554955b..b36af08fa59 100644

--- a/deepdoc/parser/utils.py

+++ b/deepdoc/parser/utils.py

@@ -14,12 +14,16 @@

# limitations under the License.

#

+from io import BytesIO

+

+from pypdf import PdfReader as pdf2_read

+

from rag.nlp import find_codec

def get_text(fnm: str, binary=None) -> str:

txt = ""

- if binary:

+ if binary is not None:

encoding = find_codec(binary)

txt = binary.decode(encoding, errors="ignore")

else:

@@ -30,3 +34,21 @@ def get_text(fnm: str, binary=None) -> str:

break

txt += line

return txt

+

+

+def extract_pdf_outlines(source):

+ try:

+ with pdf2_read(source if isinstance(source, str) else BytesIO(source)) as pdf:

+ outlines = []

+

+ def dfs(nodes, depth):

+ for node in nodes:

+ if isinstance(node, list):

+ dfs(node, depth + 1)

+ else:

+ outlines.append((node["/Title"], depth, pdf.get_destination_page_number(node) + 1))

+

+ dfs(pdf.outline, 0)

+ return outlines

+ except Exception:

+ return []

diff --git a/deepdoc/vision/__init__.py b/deepdoc/vision/__init__.py

index 6b88b792d6b..8d6c6c398a2 100644

--- a/deepdoc/vision/__init__.py

+++ b/deepdoc/vision/__init__.py

@@ -60,9 +60,8 @@ def images_and_outputs(fnm):

pdf_pages(fnm)

return

try:

- fp = open(fnm, "rb")

- binary = fp.read()

- fp.close()

+ with open(fnm, "rb") as fp:

+ binary = fp.read()

images.append(Image.open(io.BytesIO(binary)).convert("RGB"))

outputs.append(os.path.split(fnm)[-1])

except Exception:

diff --git a/deepdoc/vision/layout_recognizer.py b/deepdoc/vision/layout_recognizer.py

index 5b79e2bf5c6..be1f8667cec 100644

--- a/deepdoc/vision/layout_recognizer.py

+++ b/deepdoc/vision/layout_recognizer.py

@@ -17,7 +17,7 @@

import logging

import math

import os

-# import re

+import re

from collections import Counter

from copy import deepcopy

@@ -62,9 +62,8 @@ def __init__(self, domain):

def __call__(self, image_list, ocr_res, scale_factor=3, thr=0.2, batch_size=16, drop=True):

def __is_garbage(b):

- return False

- # patt = [r"^•+$", "^[0-9]{1,2} / ?[0-9]{1,2}$", r"^[0-9]{1,2} of [0-9]{1,2}$", "^http://[^ ]{12,}", "\\(cid *: *[0-9]+ *\\)"]

- # return any([re.search(p, b["text"]) for p in patt])

+ patt = [r"\(cid\s*:\s*\d+\s*\)"]

+ return any([re.search(p, b.get("text", "")) for p in patt])

if self.client:

layouts = self.client.predict(image_list)

diff --git a/deepdoc/vision/ocr.py b/deepdoc/vision/ocr.py

index 1f573bda595..d5e546a3c59 100644

--- a/deepdoc/vision/ocr.py

+++ b/deepdoc/vision/ocr.py

@@ -670,19 +670,13 @@ def detect(self, img, device_id: int | None = None):

if device_id is None:

device_id = 0

- time_dict = {'det': 0, 'rec': 0, 'cls': 0, 'all': 0}

-

if img is None:

- return None, None, time_dict

+ return None

- start = time.time()

- dt_boxes, elapse = self.text_detector[device_id](img)

- time_dict['det'] = elapse

+ dt_boxes, _ = self.text_detector[device_id](img)

if dt_boxes is None:

- end = time.time()

- time_dict['all'] = end - start

- return None, None, time_dict

+ return None

return zip(self.sorted_boxes(dt_boxes), [

("", 0) for _ in range(len(dt_boxes))])

diff --git a/deepdoc/vision/operators.py b/deepdoc/vision/operators.py

index 65d2efa4cb0..43b55ccd3a9 100644

--- a/deepdoc/vision/operators.py

+++ b/deepdoc/vision/operators.py

@@ -22,6 +22,7 @@

import numpy as np

import math

from PIL import Image

+from rag.utils.lazy_image import ensure_pil_image

class DecodeImage:

@@ -128,8 +129,9 @@ def __init__(self, scale=None, mean=None, std=None, order='chw', **kwargs):

def __call__(self, data):

img = data['image']

from PIL import Image

- if isinstance(img, Image.Image):

- img = np.array(img)

+ pil = ensure_pil_image(img)

+ if isinstance(pil, Image.Image):

+ img = np.array(pil)

assert isinstance(img,

np.ndarray), "invalid input 'img' in NormalizeImage"

data['image'] = (

@@ -147,8 +149,9 @@ def __init__(self, **kwargs):

def __call__(self, data):

img = data['image']

from PIL import Image

- if isinstance(img, Image.Image):

- img = np.array(img)

+ pil = ensure_pil_image(img)

+ if isinstance(pil, Image.Image):

+ img = np.array(pil)

data['image'] = img.transpose((2, 0, 1))

return data

diff --git a/deepdoc/vision/table_structure_recognizer.py b/deepdoc/vision/table_structure_recognizer.py

index 0cd762576c1..e0892c2d720 100644

--- a/deepdoc/vision/table_structure_recognizer.py

+++ b/deepdoc/vision/table_structure_recognizer.py

@@ -394,7 +394,7 @@ def __html_table(cap, hdset, tbl):

@staticmethod

def __desc_table(cap, hdr_rowno, tbl, is_english):

- # get text of every colomn in header row to become header text

+ # get text of every column in header row to become header text

clmno = len(tbl[0])

rowno = len(tbl)

headers = {}

diff --git a/docker/.env b/docker/.env

index 7e1bdf801bc..9fdf4e3ea1f 100644

--- a/docker/.env

+++ b/docker/.env

@@ -28,7 +28,7 @@ DEVICE=${DEVICE:-cpu}

COMPOSE_PROFILES=${DOC_ENGINE},${DEVICE}

# The version of Elasticsearch.

-STACK_VERSION=8.11.3

+STACK_VERSION=${STACK_VERSION:-8.11.3}

# The hostname where the Elasticsearch service is exposed

ES_HOST=es01

@@ -118,7 +118,7 @@ MYSQL_DBNAME=rag_flow

MYSQL_PORT=3306

# The port used to expose the MySQL service to the host machine,

# allowing EXTERNAL access to the MySQL database running inside the Docker container.

-EXPOSE_MYSQL_PORT=5455

+EXPOSE_MYSQL_PORT=3306

# The maximum size of communication packets sent to the MySQL server

MYSQL_MAX_PACKET=1073741824

@@ -152,13 +152,18 @@ SVR_WEB_HTTPS_PORT=443

SVR_HTTP_PORT=9380

ADMIN_SVR_HTTP_PORT=9381

SVR_MCP_PORT=9382

+GO_HTTP_PORT=9384

+GO_ADMIN_PORT=9383

+

+# API_PROXY_SCHEME=hybrid # go and python hybrid deploy mode

+API_PROXY_SCHEME=python # use pure python server deployment

# The RAGFlow Docker image to download. v0.22+ doesn't include embedding models.

-RAGFLOW_IMAGE=infiniflow/ragflow:v0.24.0

+RAGFLOW_IMAGE=infiniflow/ragflow:latest

# If you cannot download the RAGFlow Docker image:

-# RAGFLOW_IMAGE=swr.cn-north-4.myhuaweicloud.com/infiniflow/ragflow:v0.24.0

-# RAGFLOW_IMAGE=registry.cn-hangzhou.aliyuncs.com/infiniflow/ragflow:v0.24.0

+# RAGFLOW_IMAGE=swr.cn-north-4.myhuaweicloud.com/infiniflow/ragflow:v0.25.0

+# RAGFLOW_IMAGE=registry.cn-hangzhou.aliyuncs.com/infiniflow/ragflow:v0.25.0

#

# - For the `nightly` edition, uncomment either of the following:

# RAGFLOW_IMAGE=swr.cn-north-4.myhuaweicloud.com/infiniflow/ragflow:nightly

@@ -256,6 +261,10 @@ REGISTER_ENABLED=1

# SANDBOX_ENABLE_SECCOMP=false

# SANDBOX_MAX_MEMORY=256m # b, k, m, g

# SANDBOX_TIMEOUT=10s # s, m, 1m30s

+# The MinIO bucket name for storing sandbox-generated artifacts (charts, files, etc.).

+SANDBOX_ARTIFACT_BUCKET=sandbox-artifacts

+# Number of days before sandbox artifacts are automatically deleted from storage.

+SANDBOX_ARTIFACT_EXPIRE_DAYS=7

# Enable DocLing

USE_DOCLING=false

@@ -276,4 +285,7 @@ DOTNET_SYSTEM_GLOBALIZATION_INVARIANT=1

# Used for ThreadPoolExecutor

-THREAD_POOL_MAX_WORKERS=128

\ No newline at end of file

+THREAD_POOL_MAX_WORKERS=128

+

+# Option to disable login form for SSO

+DISABLE_PASSWORD_LOGIN=false

diff --git a/docker/README.md b/docker/README.md

index c6422bad8c7..b2a9b2fd70e 100644

--- a/docker/README.md

+++ b/docker/README.md

@@ -79,7 +79,7 @@ The [.env](./.env) file contains important environment variables for Docker.

- `SVR_HTTP_PORT`

The port used to expose RAGFlow's HTTP API service to the host machine, allowing **external** access to the service running inside the Docker container. Defaults to `9380`.

- `RAGFLOW-IMAGE`

- The Docker image edition. Defaults to `infiniflow/ragflow:v0.24.0`. The RAGFlow Docker image does not include embedding models.

+ The Docker image edition. Defaults to `infiniflow/ragflow:v0.25.0`. The RAGFlow Docker image does not include embedding models.

> [!TIP]

diff --git a/docker/docker-compose-base.yml b/docker/docker-compose-base.yml

index f82f8027333..1030136bb5e 100644

--- a/docker/docker-compose-base.yml

+++ b/docker/docker-compose-base.yml

@@ -36,7 +36,7 @@ services:

opensearch01:

profiles:

- opensearch

- image: hub.icert.top/opensearchproject/opensearch:2.19.1

+ image: opensearchproject/opensearch:2.19.1

volumes:

- osdata01:/usr/share/opensearch/data

ports:

@@ -72,7 +72,7 @@ services:

infinity:

profiles:

- infinity

- image: infiniflow/infinity:v0.7.0-dev2

+ image: infiniflow/infinity:v0.7.0-dev5

volumes:

- infinity_data:/var/infinity

- ./infinity_conf.toml:/infinity_conf.toml

@@ -202,7 +202,7 @@ services:

restart: unless-stopped

minio:

- image: quay.io/minio/minio:RELEASE.2025-06-13T11-33-47Z

+ image: pgsty/minio:RELEASE.2026-03-25T00-00-00Z

command: ["server", "--console-address", ":9001", "/data"]

ports:

- ${MINIO_PORT}:9000

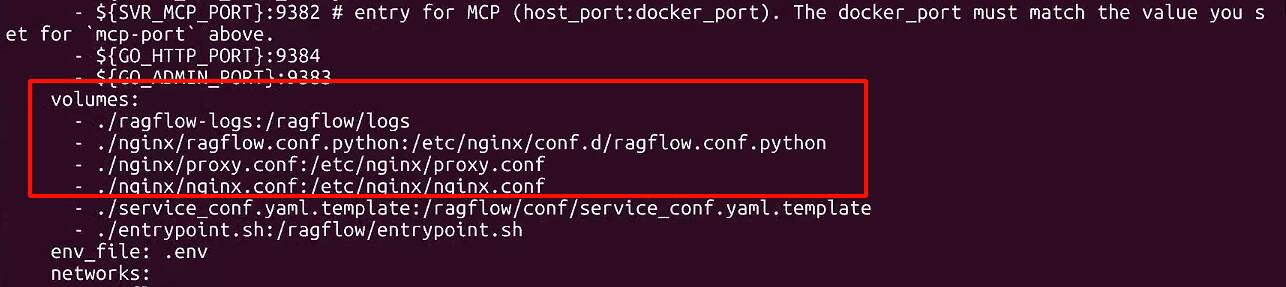

diff --git a/docker/docker-compose.yml b/docker/docker-compose.yml

index a32c2b609ef..6eba5825d6c 100644

--- a/docker/docker-compose.yml

+++ b/docker/docker-compose.yml

@@ -34,11 +34,13 @@ services:

- ${SVR_HTTP_PORT}:9380

- ${ADMIN_SVR_HTTP_PORT}:9381

- ${SVR_MCP_PORT}:9382 # entry for MCP (host_port:docker_port). The docker_port must match the value you set for `mcp-port` above.

+ - ${GO_HTTP_PORT}:9384

+ - ${GO_ADMIN_PORT}:9383

volumes:

- ./ragflow-logs:/ragflow/logs

- - ./nginx/ragflow.conf:/etc/nginx/conf.d/ragflow.conf

- - ./nginx/proxy.conf:/etc/nginx/proxy.conf

- - ./nginx/nginx.conf:/etc/nginx/nginx.conf

+ # - ./nginx/ragflow.conf:/etc/nginx/conf.d/ragflow.conf

+ # - ./nginx/proxy.conf:/etc/nginx/proxy.conf